OpenClaw

Personal AI assistant that runs on your own devices, works across many chat channels, supports voice on macOS / iOS / Android, includes Live Canvas, and can expand through ClawHub.

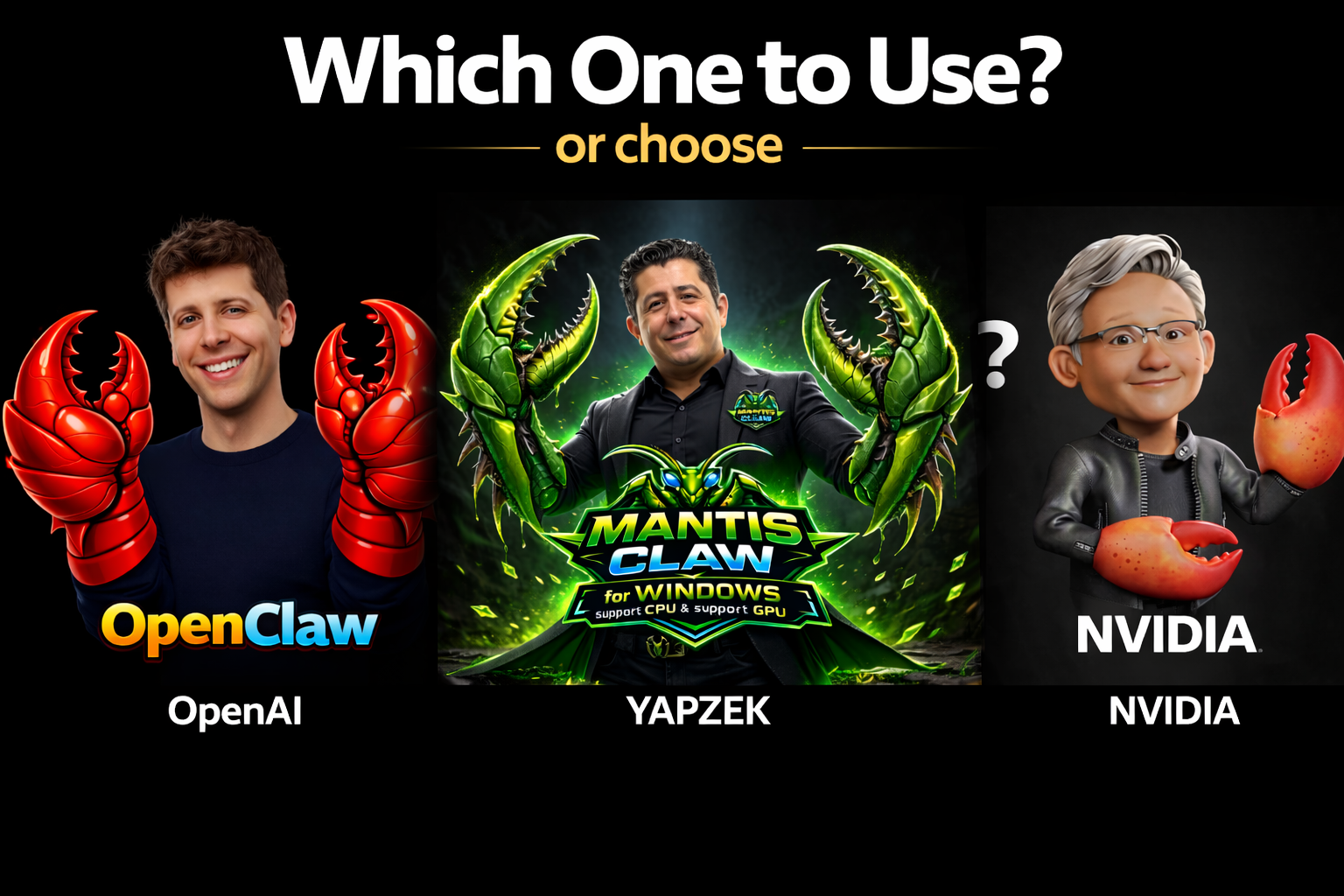

A detailed comparison of OpenClaw, MantisClaw, and NemoClaw, focused on positioning, architecture, channels, orchestration depth, automation capability, execution model, and security direction.


MantisClaw: Multi-channel desktop AI orchestration platform with a 12-state workflow, deep browser automation, runtime Python code execution, MCP tools, advanced HTTP calling, and strong structured task handling.

NemoClaw: NVIDIA’s secure OpenClaw deployment layer, centered around OpenShell sandboxing, controlled inference routing, and policy-based isolation for safer operation.



| Category | OpenClaw | MantisClaw | NemoClaw |
|---|---|---|---|
| Main Positioning | Personal AI assistant you run on your own devices. | Multi-channel AI automation and orchestration platform with desktop-native execution (WhatsApp, Telegram, Slack, Built-in Chat, Blender Chat). | NVIDIA plugin / deployment layer for secure installation and operation of OpenClaw. |
| Primary Focus | Multi-channel communication, device integration, voice, and assistant usability. | Structured automation, orchestration depth, browser workflows, and runtime execution. | Sandboxed execution, policy control, and safer infrastructure. |
| Architecture Style | Gateway / control-plane style assistant with device and channel routing. | 12-state orchestrator with DAG-style execution and retry / replan cycles. | Plugin + blueprint + OpenShell sandbox lifecycle. |
| Runtime Stack | TypeScript / Node.js ecosystem with native device integrations. | Go + Python hybrid runtime. | OpenShell-based isolated runtime with NVIDIA-managed components. |
| Deployment Model | Self-hosted assistant stack across devices, channels, and clients. | Desktop-native deployment with single-binary orientation. | Onboarding, launch, status, logs, and sandbox management through CLI and OpenShell flow. |
| Messaging Channels | Strong: Broad channel coverage including WhatsApp, Telegram, Slack, Discord, Google Chat, Signal, iMessage, Teams, Matrix, LINE, Mattermost, IRC, and more. | Multi-Channel: Supports WhatsApp, Telegram, Slack, Built-in Chat, and Blender Chat. MCP tools and advanced HTTP tool calling extend integration capabilities. | Indirect: Not a standalone messaging platform by itself; depends on OpenClaw. |
| Voice Capability | Yes: Voice Wake and Talk Mode on macOS / iOS, plus continuous voice support on Android. | Yes: Whisper STT + OpenAI TTS voice pipeline. | Indirect: Inherits capability through OpenClaw rather than leading with voice as its own product layer. |
| Visual Workspace / UI | Yes: Includes Live Canvas / A2UI and device-facing UX. | Desktop-oriented: Positioned as a desktop-native product with embedded UI. | Ops-oriented: More focused on operational control than end-user visual workspace. |
| Orchestration Depth | Moderate: Strong routing and session-based assistant model, but not positioned as the deepest orchestration engine. | High: Public comparison emphasizes 12 states, multi-intent handling, retry / replan loops, and DAG execution. | Not core focus: Focuses more on controlled runtime than orchestration sophistication. |
| Browser Automation | Present, but not the main differentiator: Useful assistant capabilities exist, but broad messaging and device integration are more central to the pitch. | Major strength: Public comparison highlights Playwright-Go, anti-detection, media extraction, playbooks, and a browser vision loop. | No standalone browser automation focus: Security wrapper rather than browser-first automation product. |
| Tool / Skill Expansion | Good: ClawHub allows skill discovery and pulling new skills automatically. | Very strong + MCP + HTTP: Runtime Python generation for unlimited tooling, MCP Client + Server for extensible tool discovery (including Blender MCP), and advanced HTTP tool calling for dynamic REST API / webhook integration. | Controlled: Extensibility exists inside a more policy-controlled, sandboxed model. |
| Code Execution Model | Host assistant workflow with bundled / registry-based skills. | Go → Python execution path with persistent execution model highlighted on its comparison page. | OpenClaw runs inside an OpenShell sandbox with controlled environment boundaries. |
| Security Direction | Session-based assistant control and local / self-hosted orientation. | Public comparison emphasizes keeping settings / credentials out of the LLM prompt and injecting them at runtime. | Strongest security-centric story here: policy-enforced egress, filesystem boundaries, process controls, and controlled inference routing. |
| Sandboxing | Some isolation concepts exist, but sandboxing is not the main public identity. | Execution isolation exists, but the main public story is orchestration and automation depth. | Core capability: OpenShell sandbox controls network, filesystem, process behavior, and inference routing. |
| Best Fit | People who want a cross-channel, device-aware, personal AI assistant. | Teams or builders who want deep automation, structured orchestration, and powerful execution. | Users who want a more controlled and security-oriented way to run OpenClaw. |
| Simple Summary | Broadest assistant reach. | Deepest automation engine. | Safest controlled deployment layer. |
| Language | TypeScript: TypeScript + Swift (macOS) + Kotlin (Android). Large polyglot codebase across platforms. | Go + Python: Compiled Go binary for performance + Python for ML/data ecosystem. Hybrid IPC via JSON-RPC. | Python: Python-based CLI and orchestration layer wrapping OpenClaw in sandboxed containers. |
| Agent Count | 1 Pi agent (RPC) with multi-agent routing and session-based dispatch. | 10 Specialized Agents: Intent, Planner, Config, CodeGen, Executor, Debugger, Validator, Artifact, Response, Browser, plus unlimited custom agents via `my_agents`. | Inherits OpenClaw's agent model. No additional agent architecture of its own. |
| State Machine | Session-based queue modes. No formal multi-state orchestrator. | 12-State Orchestrator: received → analyzing → planning → awaiting_config → ready → executing → debugging → validating → replanning → artifact → responding → completed/failed/blocked. | Blueprint lifecycle (init → launch → running → stopped). Operational states, not task orchestration. |
| Parallel Execution | Sequential: Tasks processed sequentially within sessions. | DAG Goroutines: Actions run concurrently with dependency waiting. True parallel execution at the task level. | Sequential: Single sandbox execution model. |
| Self-Healing | No: Retry policies exist at session level, but no AI-driven error analysis and code fix loop. | Yes, via `ExecuteWithSelfHeal()`: Python execution fails → AI reads traceback → fixes code → auto-installs missing packages → retries (up to 3 attempts). | No: Sandbox restart is the recovery mechanism, not AI-driven self-healing. |
| Browser Tool Count | ~7 tools (navigate, click, type, screenshot, snapshots). Browser is a supporting feature. | 12 Tools: open, search, links, images, text, click, fill, screenshot, scroll, press, download_media, playbook. | No standalone browser tools. Inherits whatever OpenClaw provides inside the sandbox. |
| Browser Anti-Detection | No: Standard CDP-based browser access without anti-bot measures. | Full Suite: `navigator.webdriver=undefined`, fake plugins/languages, IgnoreDefaultArgs, disable-blink-features, AddInitScript for chrome.runtime spoofing. | N/A: Not applicable; security wrapper, not a browser automation product. |
| LLM Vision Loop | Snapshot-based browser interaction. No formal multi-state vision loop. | 7-State Machine: NAVIGATE → OBSERVE → THINK → ACT → EVALUATE → RECOVER → COMPLETE. Full autonomous browser vision cycle. | Not applicable. |
| Playbook Engine | No: No scriptable playbook system for browser workflows. | JSONL Playbooks: Stepwise execution (goto, screenshot, send_llm, click, fill, select, scroll, wait, get_text) with `{setting.x.y}` credential injection. | No: Blueprint-based operational sequences, not browser playbooks. |
| Code Execution Details | Host exec + Docker sandbox for skills from ClawHub registry. | Persistent Python Kernel: Go→Python JSON-RPC subprocess. `_kernel_globals` persists variables & imports across calls. Pre-loaded: openpyxl, pandas, docx, pptx, requests, xlsxwriter. | OpenShell sandbox with filesystem boundaries. Code runs inside container isolation. |
| Auto Package Install | No: Skills must be pre-installed or pulled from ClawHub. | Yes: Missing pip packages detected from ImportError → auto pip install → retry execution. | Controlled: Packages must be declared in blueprint. No runtime auto-install. |
| Skill System | ClawHub Registry: Community skill marketplace with bundled skills and install-from-registry flow. | AI Self-Healing Skills: LLM auto-creates skills → error detection → AI fix → retry. Skills discovered during execution are saved for reuse. | Policy-Controlled: Skills run inside sandbox boundaries with policy enforcement. |
| Credential Handling | Config file based. Credentials accessible to the runtime. | LLM-Isolated: Settings NEVER reach the LLM prompt. `{setting.x.y}` placeholders are resolved at runtime, injected into Python kernel globals only. | Policy-Enforced: Egress control and filesystem boundaries prevent credential leakage at infrastructure level. |
| Scheduled Tasks | No: Gateway daemon mode, but no user-facing cron/schedule system. | Cron Engine: Scheduled tasks run autonomously on cron intervals. Results auto-delivered to WhatsApp, Telegram, or Slack. | No: No scheduling system; focused on runtime control. |
| Custom Agents | No: Single Pi agent model. | ∞ Unlimited: Create unlimited custom agents via `my_agents` with per-channel assignment. Each channel can map to a different agent persona. | No: Inherits OpenClaw's agent model. |
| Scenarios / Workflows | No: No scenario/workflow builder system. | ∞ Unlimited Scenarios: Create unlimited automation scenarios via `my_scenarios`. Define complex multi-step workflows. | Blueprints: Blueprint YAML definitions control deployment workflow, but not task-level automation scenarios. |
| File Delivery | Channel delivery: Files sent through active chat channel. | Multi-Channel Native: Auto-detects generated files (Excel, Word, PDF, images) and sends them back to the originating channel/session (not limited to WhatsApp). | Filesystem only: Files stay inside the sandbox filesystem. |
| Memory Footprint | ~150-300 MB (Node.js runtime). | ~30-50 MB: Compiled Go binary has the smallest footprint of any comparable product. | Variable: depends on container and OpenClaw stack size. |
| Database | File-based (`~/.openclaw/`) local storage. | Embedded PostgreSQL: 37 tables. Full relational DB embedded in the single binary. No external server required. | Depends on OpenClaw's storage inside the sandbox. |
| Multi-Intent | Single session: One intent per session interaction. | Yes: JSON array format allows multiple intents per single message. All processed in one orchestration cycle. | N/A: Not an intent-processing product. |
| Inference Control | Direct LLM API calls. User chooses provider. | Direct OpenAI / compatible API calls with 25-turn tool-call loop. | Controlled Routing: Policy-enforced inference routing. Can restrict which models are accessible and control egress. |
| Community Size | 318K ⭐: Massive open-source community with 1,231 contributors. | Solo developer. Not yet public on GitHub. | NVIDIA-backed. Smaller community but enterprise credibility. |
| Debug / Logging | Log files. | Per-Request JSONL: `reasoning/<requestID>.jsonl` files with full timeline + artifact manifest for every execution. | Structured Logs: Sandbox status, launch logs, and operational monitoring through CLI. |
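
The Credential Handling row describes `{setting.x.y}` placeholders that stay unresolved in anything the LLM sees and are only filled in at runtime. The sketch below illustrates that general idea in Python. It is not MantisClaw's implementation: the function names, the regular expression, and the nested `settings` dictionary are all assumptions made for illustration, and it uses plain string substitution, whereas the table says the real values are injected into the Python kernel globals.

```python
import re
from collections.abc import Mapping
from typing import Any

# Assumed placeholder syntax: {setting.<key>.<key>...}, e.g. {setting.smtp.password}
PLACEHOLDER = re.compile(r"\{setting\.([A-Za-z0-9_]+(?:\.[A-Za-z0-9_]+)*)\}")


def lookup(settings: Mapping[str, Any], dotted_path: str) -> Any:
    """Walk a nested mapping along a dotted path such as 'smtp.password'."""
    node: Any = settings
    for key in dotted_path.split("."):
        if not isinstance(node, Mapping) or key not in node:
            raise KeyError(f"unknown setting: {dotted_path}")
        node = node[key]
    return node


def resolve_placeholders(text: str, settings: Mapping[str, Any]) -> str:
    """Replace every {setting.x.y} placeholder with its value from settings."""
    return PLACEHOLDER.sub(lambda m: str(lookup(settings, m.group(1))), text)


# Hypothetical settings store; it is never included in the prompt.
settings = {"smtp": {"host": "mail.example.com", "password": "s3cret"}}

# What the model reads and writes: placeholders only, no secret values.
generated_code = 'connect("{setting.smtp.host}", password="{setting.smtp.password}")'

# Only at execution time are the placeholders swapped for real values.
runnable_code = resolve_placeholders(generated_code, settings)
print(runnable_code)
```

Raising `KeyError` on an unknown path, rather than leaving the placeholder in place, is a deliberate choice in this sketch: a misspelled setting fails loudly before any code runs.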